By Marcello Cherchi, MD PhD

Artificial intelligence (AI)

Credit is usually given to John McCarthy (1927 – 2011) for having coined the term “artificial intelligence” (AI) in a 1955 proposal, written with Marvin Minsky, Nathaniel Rochester and Claude Shannon, for a summer workshop at Dartmouth (McCarthy et al. 1955).

The definition of artificial intelligence (AI) is debated, but the term is generally taken to refer to the ability of computers to perform tasks that normally require human cognition. Artificial intelligence can be classified in several ways.

A common classification of AI is by its level of capability which, in ascending order, is:

- Artificial narrow intelligence (ANI), also called “weak AI” or machine learning, specializes in one area and solves one kind of problem.

- Artificial general intelligence (AGI), also called “strong AI” or machine intelligence, refers to a computer program whose problem-solving abilities are equivalent to those of a human (Goertzel 2014; Yamakawa 2021). This probably does not yet exist, though some commentators submit that the advent of platforms for large language models such as ChatGPT (by OpenAI), LLaMa (by Meta), Bard (by Google), Claude (by Anthropic) and others shows that it is “already here” (Aguera y Arcas and Norvig 2023).

- Artificial superintelligence (ASI), also called machine consciousness, refers to a computer that is self-aware (sentient) and whose capabilities surpass those of a human (Katritsis 2021). This does not yet exist.

Another classification of AI is by its level of functionality which, in ascending order, is:

- Reactive machines. This is the most basic type of AI which, when given a particular input, will always respond with the same specific output. This AI does not change after it has been trained; in other words, it does not “learn” from experience, and it cannot adapt or iteratively improve. Real-world examples of reactive machines include IBM’s Deep Blue chess-playing system.

- Limited memory machines. This type of AI retains information to which it is exposed. Real-world examples include the systems of self-driving cars that take input from the environment and generate a reaction to that set of circumstances.

- Theory of mind. This kind of AI can understand human emotions and beliefs and respond to situations like a person would — basically, it could pass the Turing test (Turing 1950). This kind of AI does not yet exist.

- Self-aware machines. This kind of AI has human-like feelings, beliefs, awareness, and cognitive abilities superior to humans. This kind of AI does not yet exist.

Machine learning (ML)

Machine learning (ML) is a subtype of artificial intelligence (AI). According to the classifications mentioned earlier, the ML usually applied to medical diagnostic problems is an artificial narrow intelligence (“weak AI”) that is driving either a reactive machine or a limited memory machine.

Origins of ML

Arthur Lee Samuel (1901 – 1990) (McCarthy and Feigenbaum 1990; Weiss 1992) is credited with having defined machine learning (ML) as “the field of study that gives computers the ability to learn without being explicitly programmed,” though this quotation is not found in either of his initial articles on this topic (Samuel 1959, 1967).

Basic function of machine learning

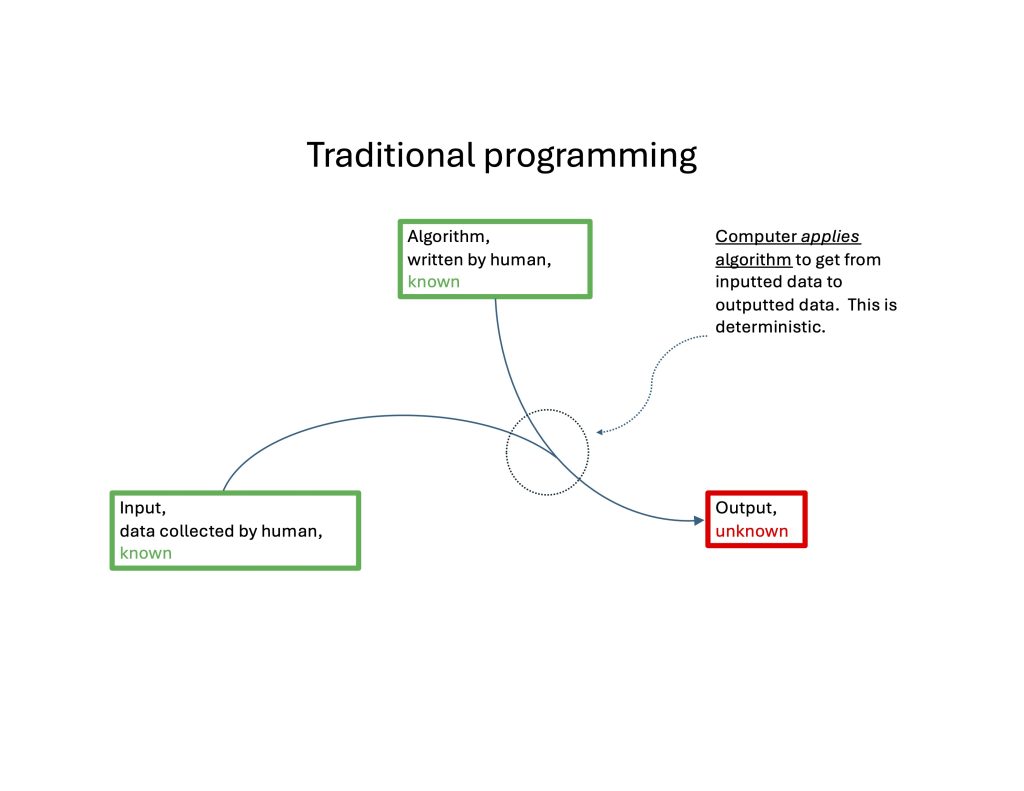

In traditional computer programming, the computer takes known data as input, processes them with known (programmed) rules, and outputs (previously unknown) answers.

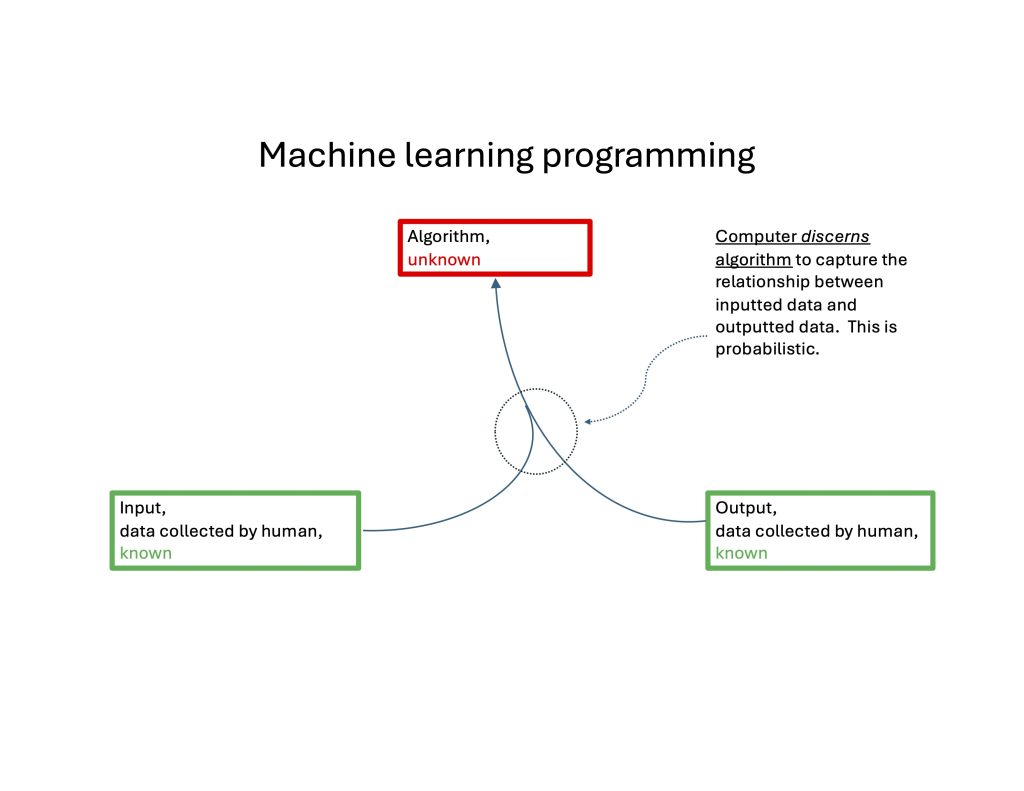

In machine learning, the computer takes both known data and known answers as input, and outputs (previously unknown) rules that capture the relationship between the data and the answers.

The general schemata of “traditional programming” and “machine learning” are compared in the two figures above.
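The contrast between the two schemata can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a clinical method: the single numeric measurement, the labels, the hand-picked cut-off of 5.0 and the function names `programmed_rule` and `learn_threshold` are all hypothetical, and the "learning" here is nothing more than a search for the cut-off that best separates the labelled examples.

```python
def programmed_rule(value, threshold=5.0):
    """Traditional programming: a human supplies the rule.

    The threshold of 5.0 is a hypothetical, hand-picked value.
    """
    return value >= threshold


def learn_threshold(values, answers):
    """Machine learning in miniature: derive the rule from data plus known answers.

    Tries a cut-off midway between each pair of adjacent distinct values and
    keeps the one that classifies the most training examples correctly.
    Returns None if there are fewer than two distinct values.
    """
    points = sorted(set(values))
    candidates = [(a + b) / 2 for a, b in zip(points, points[1:])]
    best_threshold, best_correct = None, -1
    for t in candidates:
        correct = sum((v >= t) == a for v, a in zip(values, answers))
        if correct > best_correct:
            best_threshold, best_correct = t, correct
    return best_threshold


# Hypothetical training set: measurements (the data) and labels (the answers).
data = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
answers = [False, False, False, True, True, True]

# The output of learning is the rule itself, here a single cut-off value.
learned = learn_threshold(data, answers)


def learned_rule(value):
    """Apply the rule that was learned from the training set."""
    return value >= learned
```

In the first function the rule is an input written by the programmer; in the second, the rule is what comes out, and it can then be applied to new data through `learned_rule`.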


Thus, at a basic level, ML maps an input to an output. In engineering terms, this is a system identification problem: determining a transfer function from input to output, in which:

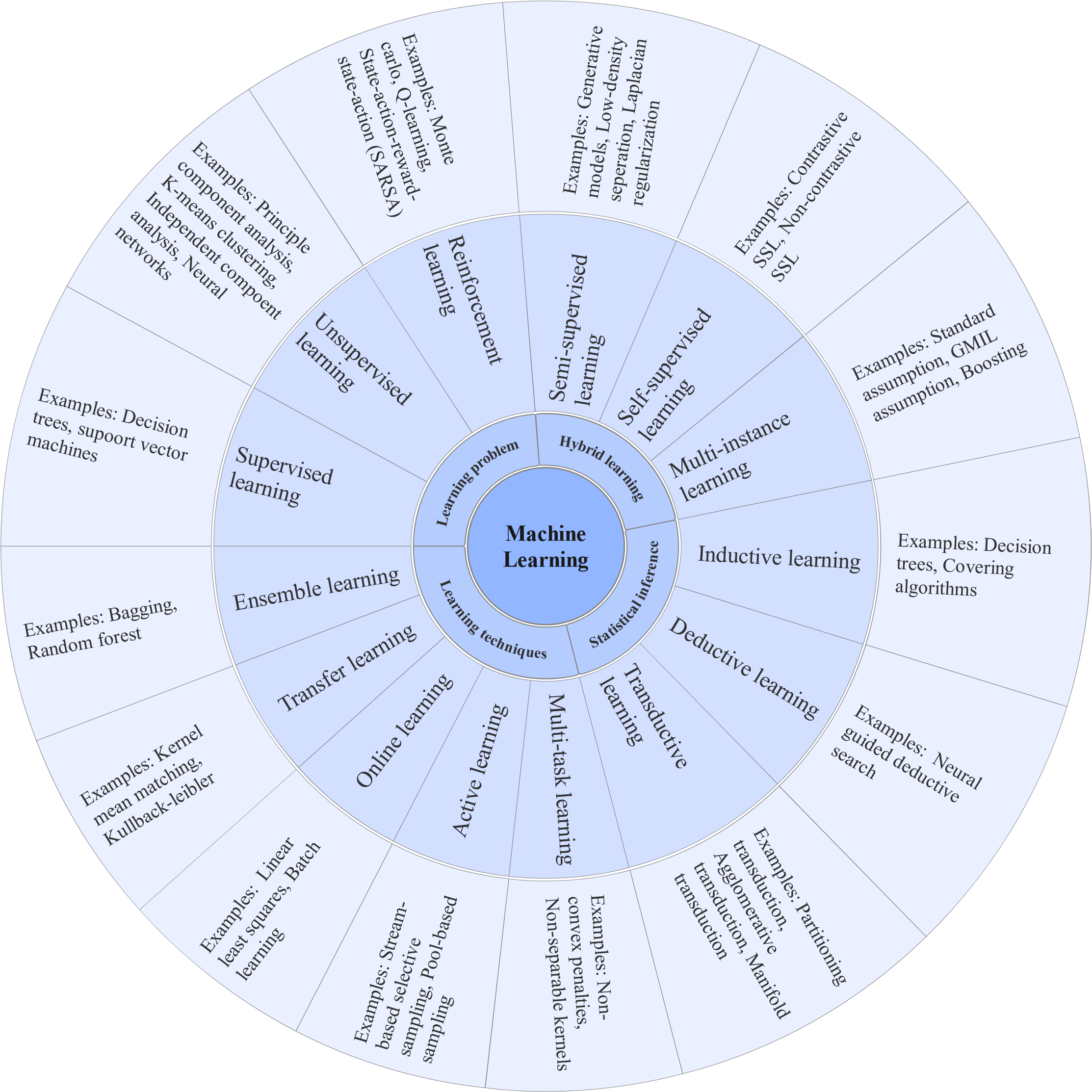

Evaluating machine learning

The performance evaluation of an ML algorithm assesses the quality of this mapping from input to output, and can be measured in a variety of ways, including recall, sensitivity, specificity, F‑measure and receiver operating characteristics (Ahsan et al. 2022).

Types of machine learning

There are many kinds of ML. The Figure below, from Ahsan and colleagues (Ahsan et al. 2022), offers a partial taxonomy of ML.

The following outline of machine learning algorithms is adapted from https://www.geeksforgeeks.org/machine-learning/ (accessed 10/8/2023).
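To make the evaluation measures named in the section on evaluating machine learning more concrete, the sketch below computes sensitivity (recall is another name for the same quantity), specificity and an F‑measure from paired true and predicted labels for a two-class problem. It is only an illustration: the function names `safe_ratio` and `evaluate_binary` and the ten example labels are hypothetical, the F‑measure shown is the common F1 variant (equal weight on precision and recall), and receiver operating characteristics are omitted because they require scores rather than yes/no predictions.

```python
def safe_ratio(numerator, denominator):
    """Return numerator / denominator, or NaN when the denominator is zero."""
    return numerator / denominator if denominator else float("nan")


def evaluate_binary(actual, predicted):
    """Compute common evaluation measures from paired True/False labels."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a and p)          # true positives
    fp = sum(1 for a, p in pairs if not a and p)      # false positives
    tn = sum(1 for a, p in pairs if not a and not p)  # true negatives
    fn = sum(1 for a, p in pairs if a and not p)      # false negatives

    sensitivity = safe_ratio(tp, tp + fn)  # also called recall
    specificity = safe_ratio(tn, tn + fp)
    precision = safe_ratio(tp, tp + fp)
    # F1: harmonic mean of precision and recall (one common F-measure).
    f1 = safe_ratio(2 * precision * sensitivity, precision + sensitivity)

    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }


# Hypothetical labels: True means "condition present", False means "absent".
actual = [True, True, True, True, False, False, False, False, False, False]
predicted = [True, True, True, False, True, False, False, False, False, False]

results = evaluate_binary(actual, predicted)
```

With these made-up labels there are 3 true positives, 1 false negative, 1 false positive and 5 true negatives, so sensitivity is 3/4 and specificity is 5/6.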

Application of machine learning in medicine in general

Dr. Scott Gottlieb, the 23rd commissioner of the United States Food and Drug Administration from 2017 – 2019, said in a statement that: “Artificial intelligence and machine learning have the potential to fundamentally transform the delivery of health care. As technology and science advance, we can expect to see earlier disease detection, more accurate diagnosis, more targeted therapies and significant improvements in personalized medicine” (from https://www.fda.gov/news-events/press-announcements/statement-fda-commissioner-scott-gottlieb-md-steps-toward-new-tailored-review-framework-artificial, accessed 11/24/23).

The following is a partial list of machine learning algorithms commonly used for medical purposes (Jiang et al. 2017):

From the examples reviewed above we can see that several different machine learning algorithms may be relevant to different problems in a particular topic or field. For example, in healthcare:

Machine learning in otoneurology: What has already been attempted?

Many machine learning techniques have found application specifically in otoneurology, including:

Machine learning has also been applied to interpretation of specific otoneurological test results, such as:

Machine learning has also been applied to the problem of identifying specific diagnoses, including:

Final comments

The authors of an opinion paper published by the National Academy of Medicine stated that “AI will not replace [healthcare] providers, but providers who leverage AI will replace those who do not” (Lomis et al. 2021). The advent of machine learning in medicine is inevitable, so this is no longer a question of whether the profession will integrate it into practice and research, but how. In doing so, medicine will have to confront a variety of questions, including ethical issues (Sood et al. 2022), the impact ML may have on human interaction (Coiera 2019), and many others. In general, ML may aid clinicians by improving diagnostic accuracy and medical decision making. More specifically, ML may also be useful in clinical fields that are esoteric or deal with rare diseases, or in a field such as otoneurology in which there is a significant mismatch between supply (small number of clinicians who practice) and demand (large number of patients who need care).

References

Aguera y Arcas B, Norvig P (2023) Artificial general intelligence is already here. Noema.

Ahmadi SA, Vivar G, Frei J, Nowoshilow S, Bardins S, Brandt T, Krafczyk S (2019) Towards computerized diagnosis of neurological stance disorders: data mining and machine learning of posturography and sway. J Neurol 266: 108-117. doi: 10.1007/s00415-019-09458-y

Ahmadi SA, Vivar G, Navab N, Mohwald K, Maier A, Hadzhikolev H, Brandt T, Grill E, Dieterich M, Jahn K, Zwergal A (2020) Modern machine-learning can support diagnostic differentiation of central and peripheral acute vestibular disorders. J Neurol 267: 143-152. doi: 10.1007/s00415-020-09931-z

Ahsan MM, Luna SA, Siddique Z (2022) Machine-Learning-Based Disease Diagnosis: A Comprehensive Review. Healthcare (Basel) 10. doi: 10.3390/healthcare10030541

Allum JH, Ura M, Honegger F, Pfaltz CR (1991) Classification of peripheral and central (pontine infarction) vestibular deficits. Selection of a neuro-otological test battery using discriminant analysis. Acta Otolaryngol 111: 16-26. doi: 10.3109/00016489109137350

Bastanlar Y, Ozuysal M (2014) Introduction to machine learning. Methods Mol Biol 1107: 105-28. doi: 10.1007/978-1-62703-748-8_7

Ben Slama A, Sahli H, Mouelhi A, Marrakchi J, Boukriba S, Trabelsi H, Sayadi M (2020) Hybrid clustering system using Nystagmus parameters discrimination for vestibular disorder diagnosis. J Xray Sci Technol 28: 923-938. doi: 10.3233/XST-200661

Brandt T, Strupp M, Novozhilov S, Krafczyk S (2012) Artificial neural network posturography detects the transition of vestibular neuritis to phobic postural vertigo. J Neurol 259: 182-4. doi: 10.1007/s00415-011-6124-8

Coiera E (2019) The Price of Artificial Intelligence. Yearb Med Inform 28: 14-15. doi: 10.1055/s-0039-1677892

Du Y, Ren L, Liu X, Wu Z (2022) Machine learning method intervention: Determine proper screening tests for vestibular disorders. Auris Nasus Larynx 49: 564-570. doi: 10.1016/j.anl.2021.10.003

Filippopulos FM, Strobl R, Belanovic B, Dunker K, Grill E, Brandt T, Zwergal A, Huppert D (2022) Validation of a comprehensive diagnostic algorithm for patients with acute vertigo and dizziness. Eur J Neurol 29: 3092-3101. doi: 10.1111/ene.15448

Formeister EJ, Baum RT, Sharon JD (2022) Supervised machine learning models for classifying common causes of dizziness. Am J Otolaryngol 43: 103402. doi: 10.1016/j.amjoto.2022.103402

Friedrich MU, Schneider E, Buerklein M, Taeger J, Hartig J, Volkmann J, Peach R, Zeller D (2023) Smartphone video nystagmography using convolutional neural networks: ConVNG. J Neurol 270: 2518-2530. doi: 10.1007/s00415-022-11493-1

Goertzel B (2014) Artificial General Intelligence: Concept, State of the Art, and Future Prospects. Journal of Artificial General Intelligence 5: 1-48. doi: 10.2478/jagi-2014-0001

Jiang F, Jiang Y, Zhi H, Dong Y, Li H, Ma S, Wang Y, Dong Q, Shen H, Wang Y (2017) Artificial intelligence in healthcare: past, present and future. Stroke Vasc Neurol 2: 230-243. doi: 10.1136/svn-2017-000101

Juhola M, Viikki K, Laurikkala J, Pyykko I, Kentala E (2001) On classification capability of neural networks: a case study with otoneurological data. Stud Health Technol Inform 84: 474-8.

Katritsis DG (2021) Artificial Intelligence, Superintelligence and Intelligence. Arrhythm Electrophysiol Rev 10: 223-224. doi: 10.15420/aer.2021.61

Kentala E, Pyykko I, Auramo Y, Juhola M (1997) Neural networks in neurotologic expert systems. Acta Otolaryngol Suppl 529: 127-9. doi: 10.3109/00016489709124102

Kim BJ, Jang SK, Kim YH, Lee EJ, Chang JY, Kwon SU, Kim JS, Kang DW (2021) Diagnosis of Acute Central Dizziness With Simple Clinical Information Using Machine Learning. Front Neurol 12: 691057. doi: 10.3389/fneur.2021.691057

Kong S, Huang Z, Deng W, Zhan Y, Lv J, Cui Y (2023) Nystagmus patterns classification framework based on deep learning and optical flow. Comput Biol Med 153: 106473. doi: 10.1016/j.compbiomed.2022.106473

Korda A, Wimmer W, Wyss T, Michailidou E, Zamaro E, Wagner F, Caversaccio MD, Mantokoudis G (2022) Artificial intelligence for early stroke diagnosis in acute vestibular syndrome. Front Neurol 13: 919777. doi: 10.3389/fneur.2022.919777

Krafczyk S, Tietze S, Swoboda W, Valkovic P, Brandt T (2006) Artificial neural network: a new diagnostic posturographic tool for disorders of stance. Clin Neurophysiol 117: 1692-8. doi: 10.1016/j.clinph.2006.04.022

Lee Y, Lee S, Han J, Seo YJ, Yang S (2023) A nystagmus extraction system using artificial intelligence for video-nystagmography. Sci Rep 13: 11975. doi: 10.1038/s41598-023-39104-7

Li CC, Zhang ZR, Liu YH, Zhang T, Zhang XT, Wang H, Wang XC (2022) Multi-Dimensional and Objective Assessment of Motion Sickness Susceptibility Based on Machine Learning. Front Neurol 13: 824670. doi: 10.3389/fneur.2022.824670

Li H, Yang Z (2023a) Torsional nystagmus recognition based on deep learning for vertigo diagnosis. Front Neurosci 17: 1160904. doi: 10.3389/fnins.2023.1160904

Li H, Yang Z (2023b) Vertical Nystagmus Recognition Based on Deep Learning. Sensors (Basel) 23. doi: 10.3390/s23031592

Lim EC, Park JH, Jeon HJ, Kim HJ, Lee HJ, Song CG, Hong SK (2019) Developing a Diagnostic Decision Support System for Benign Paroxysmal Positional Vertigo Using a Deep-Learning Model. J Clin Med 8. doi: 10.3390/jcm8050633

Lin SC, Lin MY, Kang BH, Lin YS, Liu YH, Yin CY, Lin PS, Lin CW (2023) Artificial Neural Network-Assisted Classification of Hearing Prognosis of Sudden Sensorineural Hearing Loss With Vertigo. IEEE J Transl Eng Health Med 11: 170-181. doi: 10.1109/JTEHM.2023.3242339

Lomis K, Jeffries P, Palatta A, Sage M, Sheikh J, Sheperis C, Whelan A (2021) Artificial Intelligence for Health Professions Educators. NAM Perspect 2021. doi: 10.31478/202109a

Lu W, Li Z, Li Y, Li J, Chen Z, Feng Y, Wang H, Luo Q, Wang Y, Pan J, Gu L, Yu D, Zhang Y, Shi H, Yin S (2022) A Deep Learning Model for Three-Dimensional Nystagmus Detection and Its Preliminary Application. Front Neurosci 16: 930028. doi: 10.3389/fnins.2022.930028

McCarthy J, Feigenbaum EA (1990) In Memoriam: Arthur Samuel: Pioneer in Machine Learning. AI Magazine 11: 10. doi: 10.1609/aimag.v11i3.840

McCarthy J, Minsky ML, Rochester N, Shannon CE (1955) A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence, August 31, 1955.

Miettinen K, Juhola M (2010) Classification of otoneurological cases according to Bayesian probabilistic models. J Med Syst 34: 119-30. doi: 10.1007/s10916-008-9223-z

Mouelhi A, Ben Slama A, Marrakchi J, Trabelsi H, Sayadi M, Labidi S (2021) Sparse classification of discriminant nystagmus features using combined video-oculography tests and pupil tracking for common vestibular disorder recognition. Comput Methods Biomech Biomed Engin 24: 400-418. doi: 10.1080/10255842.2020.1830972

Newman JL, Phillips JS, Cox SJ (2021a) 1D Convolutional Neural Networks for Detecting Nystagmus. IEEE J Biomed Health Inform 25: 1814-1823. doi: 10.1109/JBHI.2020.3025381

Newman JL, Phillips JS, Cox SJ (2021b) Detecting positional vertigo using an ensemble of 2D convolutional neural networks. Biomed Signal Process Control 68: 102708. doi: 10.1016/j.bspc.2021.102708

Rastall DP, Green K (2022) Deep learning in acute vertigo diagnosis. J Neurol Sci 443: 120454. doi: 10.1016/j.jns.2022.120454

Samuel AL (1959) Some Studies in Machine Learning Using the Game of Checkers. IBM Journal of Research and Development 3: 210-229. doi: 10.1147/rd.33.0210

Samuel AL (1967) Some Studies in Machine Learning Using the Game of Checkers. II—Recent Progress. IBM Journal of Research and Development 11: 601-617. doi: 10.1147/rd.116.0601

Siermala M, Juhola M, Kentala E (2008) Neural network classification of otoneurological data and its visualization. Comput Biol Med 38: 858-66. doi: 10.1016/j.compbiomed.2008.05.002

Sood A, Sangari A, Chen JY, Stoff BK (2022) The ethics of using biased artificial intelligence programs in the clinic. J Am Acad Dermatol 87: 935-936. doi: 10.1016/j.jaad.2021.11.031

Turing AM (1950) I.—Computing machinery and intelligence. Mind LIX: 433-460. doi: 10.1093/mind/LIX.236.433

Viikki K, Kentala E, Juhola M, Pyykko I (1999) Decision tree induction in the diagnosis of otoneurological diseases. Med Inform Internet Med 24: 277-89. doi: 10.1080/146392399298302

Viikki K, Kentala E, Juhola M, Pyykko I, Honkavaara P (2002) Generating decision trees from otoneurological data with a variable grouping method. J Med Syst 26: 415-25. doi: 10.1023/a:1016463032661

Weiss EA (1992) Biographies: Eloge: Arthur Lee Samuel (1901-90). IEEE Annals of the History of Computing 14: 55-69. doi: 10.1109/85.150082

Wu P, Liu X, Dai Q, Yu J, Zhao J, Yu F, Liu Y, Gao Y, Li H, Li W (2023) Diagnosing the benign paroxysmal positional vertigo via 1D and deep-learning composite model. J Neurol 270: 3800-3809. doi: 10.1007/s00415-023-11662-w

Yamakawa H (2021) The whole brain architecture approach: Accelerating the development of artificial general intelligence by referring to the brain. Neural Netw 144: 478-495. doi: 10.1016/j.neunet.2021.09.004

Yiu YH, Aboulatta M, Raiser T, Ophey L, Flanagin VL, Zu Eulenburg P, Ahmadi SA (2019) DeepVOG: Open-source pupil segmentation and gaze estimation in neuroscience using deep learning. J Neurosci Methods 324: 108307. doi: 10.1016/j.jneumeth.2019.05.016

Page first published on October 6, 2023. Page last updated on November 28, 2025